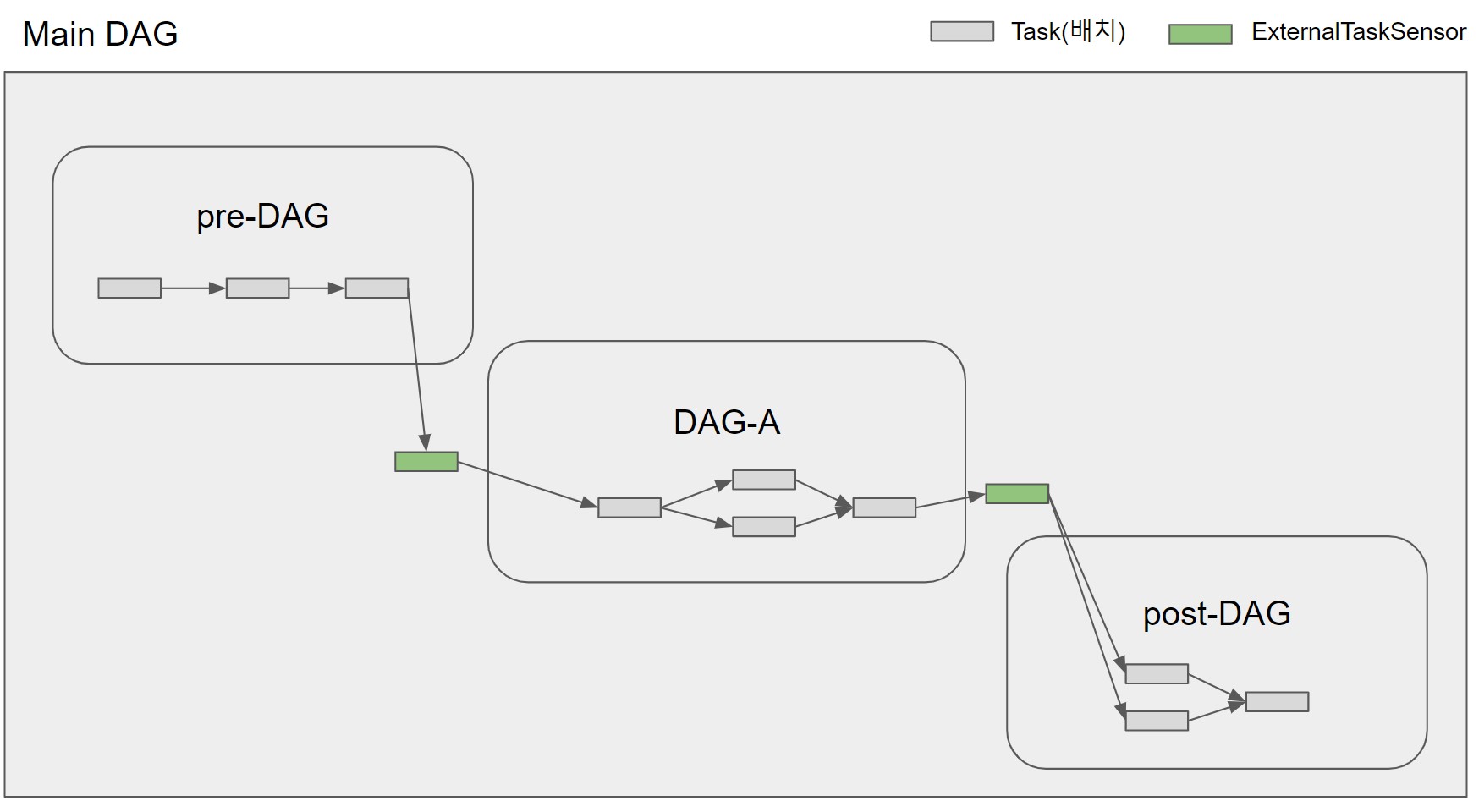

- Understand the quirks of the Airflow Scheduler.
- Understand the metadata database and external connections.
- Treat your tasks like transactions - they should be idempotent and atomic.
- Use intermediate stages to store your data.
- Your top-level DAG code should be just the orchestrator.
- Use common modules if they are shared between DAGs.
- Have common dependencies sit in a parent DAG.
- Take advantage of XCom like a built-in audit database.
- Fully take advantage of the UI's features.

Airflow Best Practice #1 - Understand how the Airflow scheduler and metadata database work

While Airflow does provide a clean and easy-to-use UI, it is still important to understand how the various components work under the hood, because there are certain quirks with Airflow that are not obvious on the surface. In particular, you should understand two key components: the scheduler and the metadata database.

High-level architecture of an Airflow-on-Kubernetes deployment I implemented in AWS:

- Webserver - what users interact with (i.e. the Airflow web UI).
- Worker pods - execute the tasks and interact with the data stores.
- RDS, CloudWatch and SSM - used for metadata storage, logging and password management, respectively.
- ECR - used to keep the Docker images which contain the DAGs.

In a way, Airflow combines the functions of a cronjob and a queuing/polling system.

All DAGs/workflows are also 100% in code, meaning everything can be:

Important concept - Airflow is NOT a big data processing engine

It is important to appreciate that Airflow plays the role of an excellent data workflow orchestrator. However, it is not intended to replace big data processing engines, such as Apache Spark or Apache Druid. In basic terms, you can think of Airflow like Azure Data Factory, but with pipelines written entirely in code.

Therefore, as a best practice, you should offload as much of your data processing as possible to other systems. For example, if you need to do transformations, use a Spark cluster; if you need to copy data from S3 into Snowflake, use Snowflake's load function.

Airflow is not an engine, but an orchestrator (photo by Mike from Pexels)

This fundamental concept underpins all the other best practices explored below.
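To make the S3-to-Snowflake example concrete, here is a minimal sketch of what "orchestrate, don't process" can look like: the Airflow side only builds and issues one Snowflake `COPY INTO` statement, and Snowflake itself reads and parses the files. It assumes an external stage (hypothetically named `s3_landing`) already points at the S3 bucket; the table name, `build_copy_into`, `load_events_task` and the `run_sql` callable (a stand-in for whatever Snowflake client or operator your deployment uses) are all illustrative, not part of Airflow's API.

```python
import re
from typing import Callable

# Conservative identifier check: letters, digits, underscores, optional db.schema.table dots.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*)*$")
# Conservative path check for the stage prefix (no quotes, spaces or semicolons).
_PATH = re.compile(r"^[A-Za-z0-9_\-./=]*$")


def build_copy_into(table: str, stage: str, prefix: str, skip_header: int = 1) -> str:
    """Build a Snowflake COPY INTO statement that bulk-loads CSV files from an external stage.

    Assumption: `stage` is an existing external stage pointing at the S3 bucket,
    so Snowflake (not Airflow) does the heavy lifting of reading the files.
    """
    for name in (table, stage):
        if not _IDENTIFIER.match(name):
            raise ValueError(f"unsafe identifier: {name!r}")
    if not _PATH.match(prefix):
        raise ValueError(f"unsafe path prefix: {prefix!r}")
    return (
        f"COPY INTO {table} FROM @{stage}/{prefix} "
        f"FILE_FORMAT = (TYPE = 'CSV' SKIP_HEADER = {int(skip_header)})"
    )


def load_events_task(run_sql: Callable[[str], None], run_date: str) -> None:
    """Hypothetical orchestration step: issue a single command and let the warehouse do the work."""
    run_sql(build_copy_into("raw.events", "s3_landing", f"events/{run_date}"))


if __name__ == "__main__":
    # Print the SQL instead of sending it to Snowflake, for illustration only.
    load_events_task(print, "2024-01-01")
```

The design point is that the task's own footprint stays tiny: a worker only needs enough resources to send one statement and wait, no matter how much data is being loaded.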

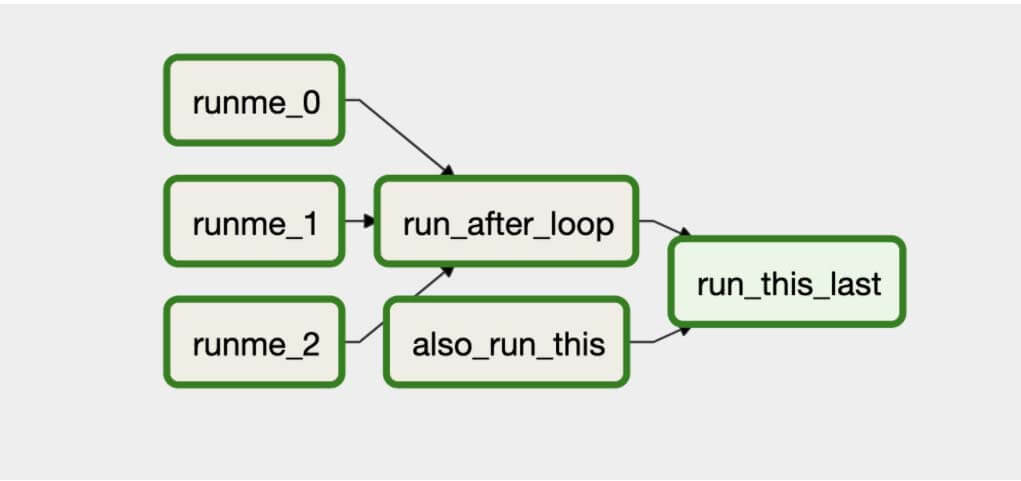

Airflow's web UI also covers managing dependencies and task monitoring/management, such as monitoring the status of each step in the workflow.

Airflow workflows are 'DAGs' and 100% code

In Airflow, the technical name for data workflows is Directed Acyclic Graphs (DAGs). It's called a DAG because the series of tasks only 'flows' in one direction (it never doubles back on itself). Each 'step' of the workflow is known as a task. An example of an Airflow DAG is shown in the accompanying image.

The main function of Airflow is its ability to schedule workflow runs. That is, there are 3 main ways an Airflow job can be triggered, one of which is event-driven: for example, a sensor on a file system or S3 bucket that triggers when new files are loaded.

Furthermore, Airflow has high availability, redundancy, and error and retry handling. Retries are handled automatically, with support for exponential backoff, a maximum number of retries and retry delays.
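The two ideas above, tasks forming a directed acyclic graph and automatic retries with exponential backoff, can be sketched in plain Python. This is a toy runner under stated assumptions, not Airflow's API or scheduler: `run_dag`, `run_task` and `backoff_delays` are hypothetical names, the delay formula `base * 2**n` capped at `max_delay` is a simplification (Airflow's own backoff calculation differs in its details), and it uses the standard-library `graphlib` module (Python 3.9+) for the topological ordering and cycle detection.

```python
import time
from graphlib import CycleError, TopologicalSorter  # standard library, Python 3.9+
from typing import Any, Callable, Dict, Iterable, List, Mapping, Tuple


def backoff_delays(retries: int, base: float, max_delay: float) -> List[float]:
    """Wait before each retry: base, 2*base, 4*base, ..., capped at max_delay."""
    return [min(base * 2 ** i, max_delay) for i in range(retries)]


def run_task(fn: Callable[[], Any], retries: int, base: float,
             max_delay: float, sleep: Callable[[float], Any]) -> Any:
    """Call fn, retrying up to `retries` times with exponential backoff between attempts."""
    delays = backoff_delays(retries, base, max_delay)
    for attempt in range(retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == retries:
                raise  # out of retries: surface the last error
            sleep(delays[attempt])


def run_dag(tasks: Mapping[str, Callable[[], Any]],
            upstream: Mapping[str, Iterable[str]],
            retries: int = 2, base: float = 1.0, max_delay: float = 60.0,
            sleep: Callable[[float], Any] = time.sleep) -> Tuple[List[str], Dict[str, Any]]:
    """Run every task once, each only after all of its upstream tasks have finished."""
    graph = {name: set(upstream.get(name, ())) for name in tasks}
    unknown = set().union(*graph.values()) - set(tasks)
    if unknown:
        raise KeyError(f"unknown upstream tasks: {sorted(unknown)}")
    # static_order() raises CycleError if the graph "doubles back" on itself.
    order = list(TopologicalSorter(graph).static_order())
    results = {name: run_task(tasks[name], retries, base, max_delay, sleep) for name in order}
    return order, results


if __name__ == "__main__":
    attempts = {"load": 0}

    def load() -> str:
        attempts["load"] += 1
        if attempts["load"] == 1:
            raise RuntimeError("transient network error")  # fails once, then succeeds
        return "loaded"

    order, results = run_dag(
        tasks={"extract": lambda: "raw", "load": load, "report": lambda: "done"},
        upstream={"load": ["extract"], "report": ["load"]},
        sleep=lambda s: print(f"retrying in {s}s"),  # print instead of sleeping, for the demo
    )
    print(order)  # ['extract', 'load', 'report']
```

Injecting `sleep` keeps the retry logic testable without real waiting; in a real deployment, retries and delays are configured per task and enforced by the scheduler rather than by a loop like this.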

What is a Data Workflow and why it is hard to manage

A data workflow is a well-defined series of steps to accomplish a particular data-related task. Examples include:

- Data pipelines - Extracting, Loading and Transforming (ELT) data from one data source to another.
- Analytics Engineering - creating, calculating or building analytics or data warehouse models using SQL.
- Machine Learning - training, evaluating and deploying an ML model.

Data workflows range from simple to very complex, with hundreds of steps and branching/dependencies. Due to their complex nature and inter-related steps, managing data workflows is often a daunting task.

Airflow is a leading Apache open-source data orchestration tool for running data workflows, used in many tech companies. For example, Uber and Airflow's original creator, Airbnb, both use Airflow extensively. Airflow provides an easy-to-use web UI, with integrated logging, monitoring, workflow run management and dependency handling.

So in this blog I'll explore some best practices to hopefully make the Airflow experience less daunting for newcomers.
